I did a similar test with an R9 3950X (Zen 2) last year. Since the results differ fundamentally from last year's, I decided to repeat the test and write a short article.

The basic structure of the Zen 3 architecture should be well known by now; if not, a quick web search will turn up plenty of information.

Methodology

An R9 5900X with a fixed 4.5 GHz core clock was used. The CPU was configured in the UEFI as follows, with the goal of having exactly 4 active cores. The notation 4-0 or 2-2 gives the number of active cores on CCD0 and CCD1. The selected games are titles that make use of many threads.

- configuration: CCD0 enabled + CCD1 disabled, 4-0, IF 1900 MHz

- configuration: CCD0 enabled + CCD1 enabled, 2-2, IF 1900 MHz

- configuration: CCD0 enabled + CCD1 disabled, 4-0, IF 1333 MHz

- configuration: CCD0 enabled + CCD1 enabled, 2-2, IF 1333 MHz

The purpose of the 2-2 configuration is to force higher intercore latencies: with only two cores active per CCD, at most 4 threads (including SMT) can run on one CCD, so any workload using more threads has to communicate across the two CCDs.
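To put a rough number on this, the following sketch counts how many pairs of hardware threads sit on different CCDs in each layout, assuming 2 SMT threads per active core. It also assumes, purely for illustration, that every thread communicates with every other thread equally often, which real games certainly do not; `thread_layout` and `cross_ccd_fraction` are hypothetical helper names, not part of any tool used in this test.

```python
from itertools import combinations

# Assumption: every active core runs 2 SMT threads.
SMT_THREADS_PER_CORE = 2


def thread_layout(cores_per_ccd):
    """Return one CCD index per hardware thread, e.g. (2, 2) -> [0,0,0,0,1,1,1,1]."""
    layout = []
    for ccd, cores in enumerate(cores_per_ccd):
        layout.extend([ccd] * (cores * SMT_THREADS_PER_CORE))
    return layout


def cross_ccd_fraction(cores_per_ccd):
    """Fraction of thread pairs on different CCDs.

    Hypothetical model: all thread pairs communicate equally often.
    """
    layout = thread_layout(cores_per_ccd)
    pairs = list(combinations(layout, 2))
    cross = sum(1 for a, b in pairs if a != b)
    return cross / len(pairs)


for config in [(4, 0), (2, 2)]:
    label = f"{config[0]}-{config[1]}"
    print(f"{label}: {len(thread_layout(config))} threads, "
          f"{cross_ccd_fraction(config):.0%} of thread pairs cross CCDs")
```

Under these assumptions the 4-0 layout never crosses CCDs, while in the 2-2 layout 16 of the 28 thread pairs (about 57%) do.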

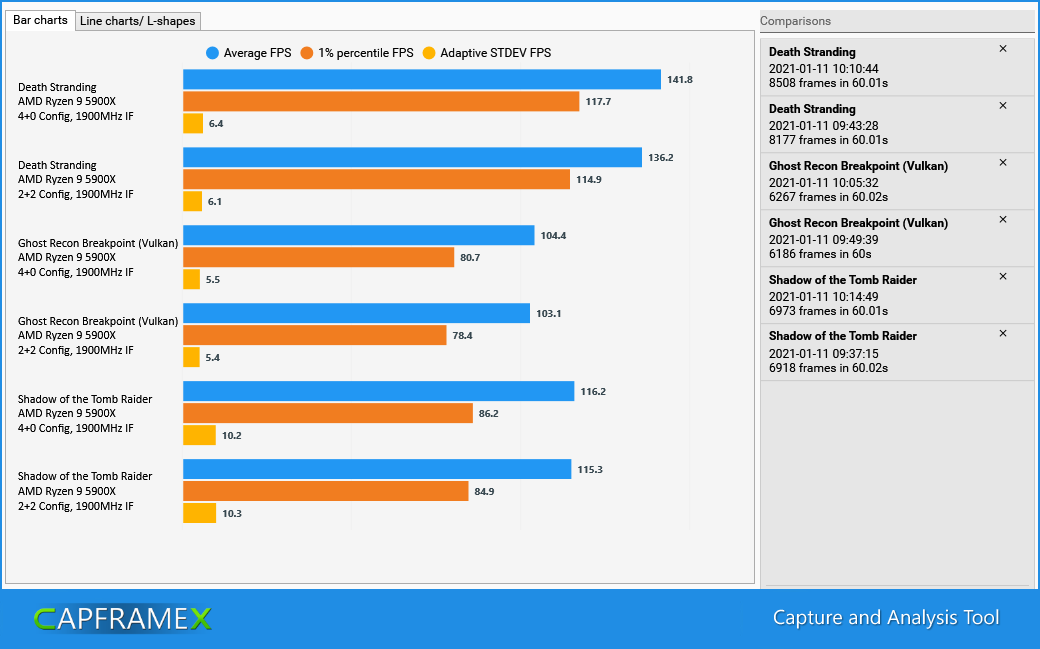

Results: 1900 MHz IF clock

Only Death Stranding shows a significant difference. Could it be that the overclocked IF is simply no longer a bottleneck? To check this, I did another run with a much lower IF clock.

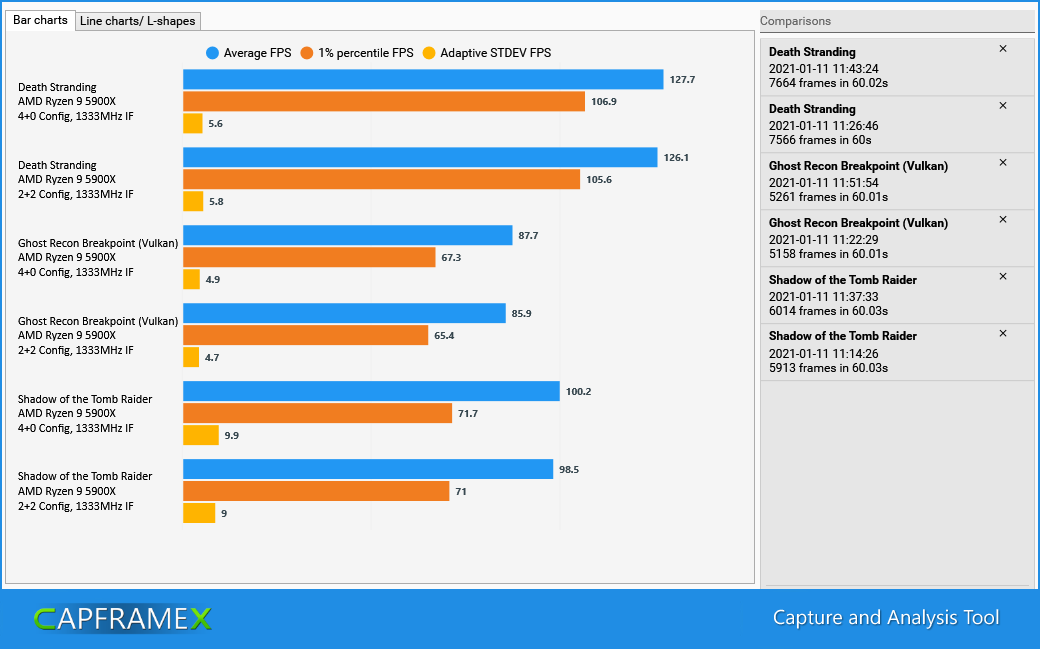

Results: 1333 MHz IF clock

Even here, the differences are marginal. The Death Stranding result with the overclocked IF almost looks like an outlier: logically, any difference that exists should grow, not shrink, as the IF gets slower.

Intercore latencies with SiSoftware Sandra

To cross-check the configurations, I ran SiSoftware Sandra's multi-core efficiency test. The numbers do reflect communication over the I/O die: only the 2-2 configurations show a second, much higher latency, and it rises as the IF clock drops.

- 4-0, IF 1900 MHz: intra-CCD ~27 ns

- 2-2, IF 1900 MHz: intra-CCD ~27 ns, inter-CCD ~61 ns

- 4-0, IF 1333 MHz: intra-CCD ~27 ns

- 2-2, IF 1333 MHz: intra-CCD ~27 ns, inter-CCD ~77 ns
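One way to read the two 2-2 runs is a simple two-point fit. Assume, hypothetically, that the latency which changes with the IF clock (~61 ns at 1900 MHz, ~77 ns at 1333 MHz) is a fixed part plus a fixed number of IF clock cycles. With only two data points, any two-parameter model fits exactly, so this only illustrates the arithmetic and is not evidence for the model; `fit_latency_model` is a hypothetical helper.

```python
def fit_latency_model(f_hi_ghz, lat_hi_ns, f_lo_ghz, lat_lo_ns):
    """Fit the hypothetical model latency = fixed_ns + if_cycles / f_IF.

    With f_IF in GHz, 1/f_IF is one IF clock period in ns, so the slope
    comes out as a (fractional) number of IF clock cycles.
    """
    if_cycles = (lat_lo_ns - lat_hi_ns) / (1 / f_lo_ghz - 1 / f_hi_ghz)
    fixed_ns = lat_hi_ns - if_cycles / f_hi_ghz
    return if_cycles, fixed_ns


# The 2-2 Sandra latencies that change with the IF clock (GHz, ns).
cycles, fixed = fit_latency_model(1.900, 61.0, 1.333, 77.0)
print(f"IF-dependent part:   ~{cycles:.0f} IF cycles")  # ~71 IF cycles
print(f"IF-independent part: ~{fixed:.0f} ns")          # ~23 ns
```

Under this model, lowering the IF clock from 1900 to 1333 MHz stretches each of roughly 71 IF cycles from about 0.53 ns to 0.75 ns, which accounts for the ~16 ns increase.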

Surprised but skeptical...

The results are surprising. A 2-2 configuration should force communication over the IF, so how can the very small differences be explained? Either the new Windows scheduler works "miracles", or intercore latency plays a smaller role in gaming workloads than one would have suspected.