Authors: devtechprofile and user "blautemple" from PCGHX forum

Since AMD has the advantage of a more advanced process node, the idea sometimes comes up that Intel CPUs consume excessive amounts of power and run hot. Among other things, this impression is reinforced by the fact that Intel has kept pushing clock speeds higher and has also raised the TDP. The current mainstream flagship, the i9-10900K, boosts up to 5.3GHz with TVB (Thermal Velocity Boost) and may draw up to 250 watts under PL2 (the maximum permitted power limit). However, this is only allowed for a short period of 56 seconds (Tau), and the actual behavior also depends on other factors such as cooling and the motherboard manufacturer's specific implementation.

How efficient are the current top models from AMD and Intel in reality? We investigated this question, restricting ourselves to gaming workloads. The results were quite surprising and far exceeded the expectations we had when we started this article.

A note on reading this article in advance: it is important not only to study the graphs but also to read the accompanying text. This is always important, of course, but we point it out explicitly this time because the text in this article is essential for interpreting the data correctly.

How do we measure efficiency?

We calculate efficiency as the quotient of the average frame rate and the package power, so the unit is FPS/W. As usual, the average frame rate is reliably determined by CapFrameX on the basis of the frame times. But what about the package power? This is a sensor value read out via the CPU's MSR interface, and the CPU in turn relies on sensors on the mainboard. The question of accuracy is not easy to answer. We compared the data from CapFrameX against an external energy meter. Taking into account efficiency curves for the power supply and the motherboard's voltage regulators, we found a maximum difference of 2-3% for the Intel system and 5% for the AMD system. These are expected errors that do not fundamentally jeopardize an efficiency analysis, but it should not go unnoticed that such sensor values can never match the accuracy of proper electrical measurement methods such as those practiced by Igor Wallossek (Igor's LAB).
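As a minimal sketch of the metric described above (not CapFrameX's actual code), the average frame rate can be computed as the number of frames divided by the total capture time, and efficiency as that value divided by the mean package power. The function names and the assumption that the package power samples cover the same capture window as the frame times are illustrative assumptions.

```python
from statistics import mean


def average_fps(frametimes_ms: list[float]) -> float:
    """Average frame rate: frames rendered divided by total capture time.

    This weights every frame by its duration, which is not the same as
    averaging the per-frame FPS values (1000 / frametime).
    """
    total_seconds = sum(frametimes_ms) / 1000.0
    return len(frametimes_ms) / total_seconds


def efficiency_fps_per_watt(frametimes_ms: list[float],
                            package_power_samples_w: list[float]) -> float:
    """Efficiency in FPS/W; assumes the power samples cover the same run."""
    return average_fps(frametimes_ms) / mean(package_power_samples_w)


# Hypothetical capture: four frames of 10 ms each, package power sampled around 50 W.
frametimes = [10.0, 10.0, 10.0, 10.0]
power = [49.0, 51.0, 50.0]
print(efficiency_fps_per_watt(frametimes, power))
```

Computing the average as frames over time matters when frame times vary: two frames of 10 ms and 30 ms give 50 FPS, whereas averaging the per-frame rates would misleadingly give about 67 FPS.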

We tested three different resolutions. 720p is meant to maximize the load on the CPU. In addition, we tested the more practical resolutions 1440p and 2160p to examine how the CPUs behave when the load shifts towards the graphics card. Strictly speaking, this is then no longer a measure of CPU efficiency, since the graphics card limits the frame rate. Nevertheless, this is exactly what makes it interesting: how does the boost algorithm behave when the CPU's performance recedes into the background?

Test systems

The test systems were tested both according to specification (although the Intel CPU ran with 3200MT/s RAM instead of its specified 2933MT/s) and "optimized". In both cases, the optimized profile means that the CPUs are underclocked to a fixed 4.5GHz and the core voltage is reduced as far as possible, with stability as the decisive criterion. Note that the voltages of the two CPUs are not directly comparable, since they differ fundamentally in both architecture and manufacturing. Under load, the voltage drops, and the greater the load, the larger the drop. This is the so-called Vdroop, with which the motherboard tries to prevent large voltage spikes during load changes. On the 5900X, even a core voltage of 1.15V did not allow stable operation: HWiNFO reported the voltage dropping below 1.1V under load, which led to system crashes. On the Intel system, the voltage could be lowered to 1.08V, but under heavy load it drops to about 1V. Stability was verified with Prime95, including AVX2 load.
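The effect of Vdroop can be approximated with a simple linear load-line model, in which the voltage under load equals the set voltage minus the current times the load-line resistance. This is a simplification of what the voltage regulators actually do, and the 1 mΩ load-line and 80 A current used below are hypothetical values, chosen only so the numbers resemble the Intel case above (1.08V set, about 1V under heavy load). They were not measured on these boards.

```python
def loaded_voltage(v_set: float, current_a: float, r_loadline_ohm: float) -> float:
    """Core voltage under load in a simple linear load-line (Vdroop) model."""
    return v_set - current_a * r_loadline_ohm


def required_set_voltage(v_min_stable: float, max_current_a: float,
                         r_loadline_ohm: float) -> float:
    """Set voltage needed so the drooped voltage never falls below v_min_stable."""
    return v_min_stable + max_current_a * r_loadline_ohm


R_LL = 0.001  # hypothetical 1 mOhm load-line, for illustration only

print(loaded_voltage(1.08, 80.0, R_LL))       # drooped voltage at 80 A
print(required_set_voltage(1.0, 80.0, R_LL))  # set voltage to stay >= 1.0 V at 80 A
```

The second function mirrors the 5900X observation: if the CPU becomes unstable once the loaded voltage falls below roughly 1.1V, the set voltage has to include headroom for the droop, which is consistent with 1.15V not being enough.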

Since two different models of the RTX 3090 (Asus Strix and TUF) were used in the test, we ran both at 1800MHz and 0.825V to ensure that the TUF's power limit was sufficient. The memory was left at its stock 9750MHz. This approach worked: the clock rates were always identical.
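Why undervolting keeps a card within its power limit can be illustrated with the common first-order approximation for dynamic power, P ≈ C·V²·f: power scales linearly with clock but quadratically with voltage. The same reasoning applies to the fixed 4.5GHz CPU profiles. The sketch below computes the relative change between two operating points; the reference point of 1.0V at 1950MHz is a hypothetical example, not a measured stock value for either card, and the model ignores static (leakage) power.

```python
def relative_dynamic_power(v_new: float, f_new_mhz: float,
                           v_ref: float, f_ref_mhz: float) -> float:
    """Ratio P_new / P_ref under the first-order model P ~ C * V^2 * f.

    The switched capacitance C cancels out, so only voltage and clock matter.
    Static (leakage) power is ignored.
    """
    return (v_new / v_ref) ** 2 * (f_new_mhz / f_ref_mhz)


# Hypothetical reference point (1.0 V at 1950 MHz) vs. the 0.825 V / 1800 MHz setting.
ratio = relative_dynamic_power(0.825, 1800.0, 1.0, 1950.0)
print(f"Estimated dynamic power: {ratio:.0%} of the reference point")
```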

Intel

Stock

CPU: i9-10900K, PL1=default, PL2=default

RAM: 3200MT/s (C16-16-36-2T)

Mainboard: Asus Z490 Maximus XII Hero

Graphics card: RTX 3090@UV 1800MHz 0.825V

OC

CPU: i9-10900K, 4.5GHz core clock, 1.08V core voltage

RAM: 4266MT/s (C16-17-17-37-2T)

Mainboard: Asus Z490 Maximus XII Hero

Graphics card: RTX 3090@UV 1800MHz 0.825V

AMD

Stock

CPU: R9 5900X

RAM: 3200MT/s (C16-16-36-2T)

Mainboard: Gigabyte X570 Aorus Master

Graphics card: RTX 3090@UV 1800MHz 0.825V

OC

CPU: R9 5900X, 4.5GHz core clock, 1.2V core voltage

RAM: 3733MT/s (C16-16-36-1T)

Mainboard: Gigabyte X570 Aorus Master

Graphics card: RTX 3090@UV 1800MHz 0.825V

Benchmark suite

We based our game selection on PCGH's benchmark suite for CPUs and GPUs, but did not use all of its titles. The (mini) suite is meant to be manageable but also well diversified, with games of both high and normal CPU load. We included Cyberpunk 2077 as a bonus title due to its popularity. The 720p tests were performed with the CPU settings and CPU scenes. The 1440p and 2160p tests, on the other hand, were performed with the GPU settings but still in the CPU scenes. This way, the limit could be shifted as far as possible towards the GPU while maintaining a high CPU load at the same time.

- Death Stranding

- F1 2020

- Ghost Recon Breakpoint

- Shadow of the Tomb Raider

- Star Wars Jedi: Fallen Order

- Cyberpunk 2077
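The benchmark plan described above can be written down as a test matrix. This is a sketch of how the runs combine, not necessarily how they were organized in practice; the labels "CPU settings" and "GPU settings" stand for PCGH's respective presets, and every game is run in its CPU scene at all resolutions.

```python
from itertools import product

GAMES = [
    "Death Stranding",
    "F1 2020",
    "Ghost Recon Breakpoint",
    "Shadow of the Tomb Raider",
    "Star Wars Jedi: Fallen Order",
    "Cyberpunk 2077",
]
RESOLUTIONS = ["720p", "1440p", "2160p"]
SYSTEMS = ["i9-10900K", "R9 5900X"]
PROFILES = ["stock", "optimized"]


def settings_for(resolution: str) -> str:
    """720p uses the CPU settings; 1440p and 2160p use the GPU settings."""
    return "CPU settings" if resolution == "720p" else "GPU settings"


def build_matrix() -> list[dict[str, str]]:
    """Every combination of game, resolution, system and profile."""
    runs = []
    for game, res, system, profile in product(GAMES, RESOLUTIONS, SYSTEMS, PROFILES):
        runs.append({
            "game": game,
            "resolution": res,
            "system": system,
            "profile": profile,
            "settings": settings_for(res),
            "scene": "CPU scene",  # the CPU scene is used at every resolution
        })
    return runs


runs = build_matrix()
print(len(runs))  # 6 games x 3 resolutions x 2 systems x 2 profiles
```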

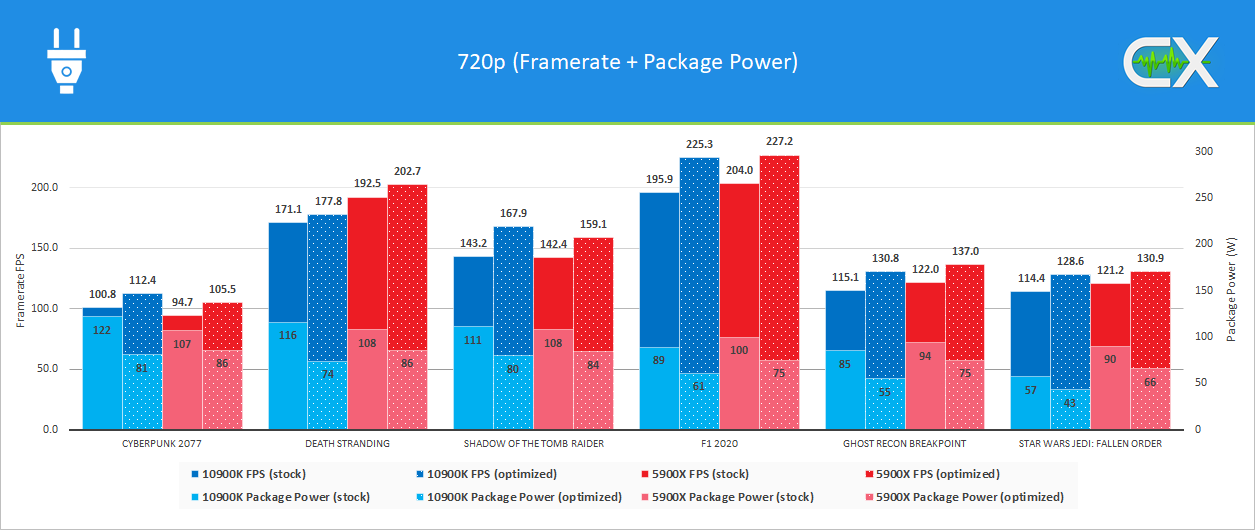

Performance and power consumption (720p)

720p is the scenario with the highest CPU load; the settings are chosen to maximize the number of draw calls. The results are grouped by game. Performance increases significantly with the optimized profiles, while power consumption decreases significantly at the same time.

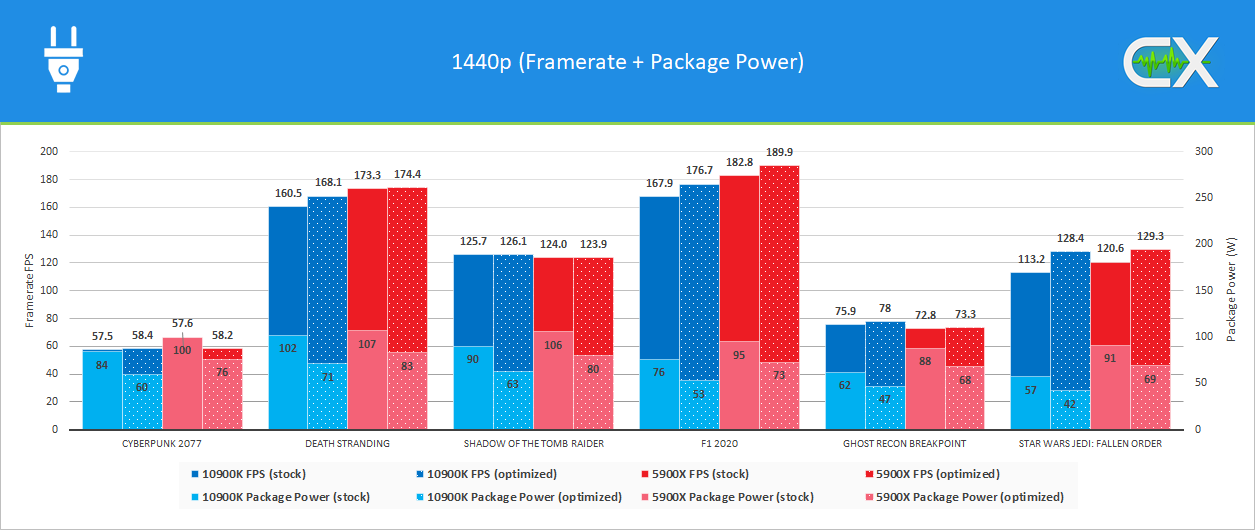

Performance and power consumption (1440p)

At 1440p, the GPU is already the limiting factor for the most part, since in addition to the resolution, the graphics settings were also raised to shift load away from the CPU. Nevertheless, the optimized profile shows significant performance increases in some cases. The GPU limit is not as pronounced as in the 2160p test. Only Death Stranding still shows a slight limit, and Star Wars is completely CPU-limited.

Important note: The graphic has two y-axes so that it can also show the package power. Because of the scaling, the package power bars partly overlap the FPS bars in Cyberpunk 2077; this is marked by a small vertical line above the bars. In this case, pay attention to the labels/numbers rather than the bar heights.

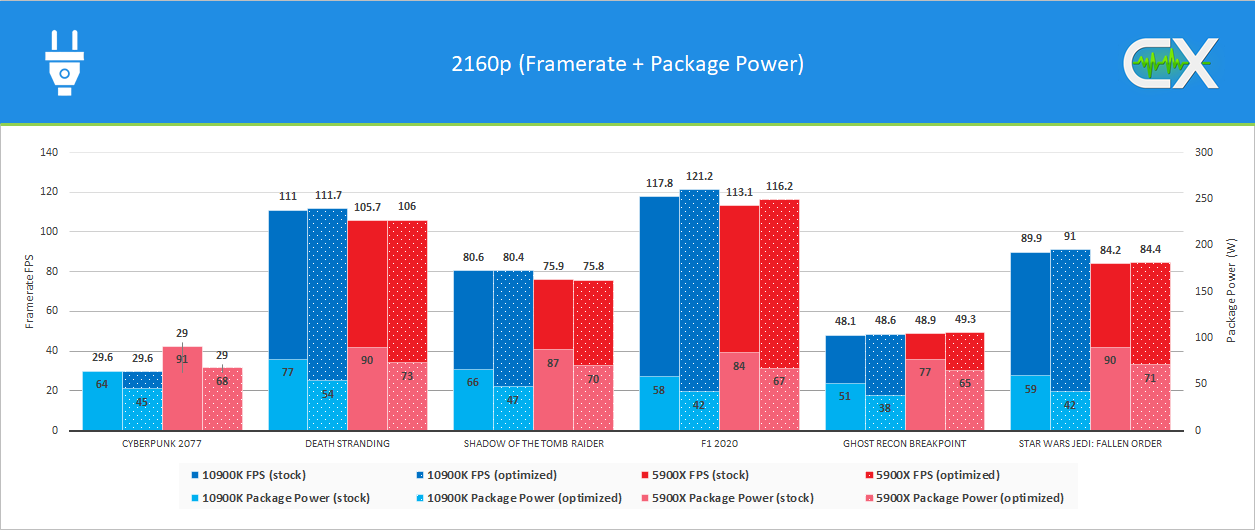

Performance and power consumption (2160p)

As expected, 2160p puts the highest load on the graphics card, and accordingly the differences between the i9-10900K and the R9 5900X are the smallest. Despite the significantly lower CPU load, there are significant differences in package power. Compared to 720p, the power consumption of the Intel CPU drops more than that of its AMD rival.

Important note: The same two-axis caveat as in the 1440p scenario applies.

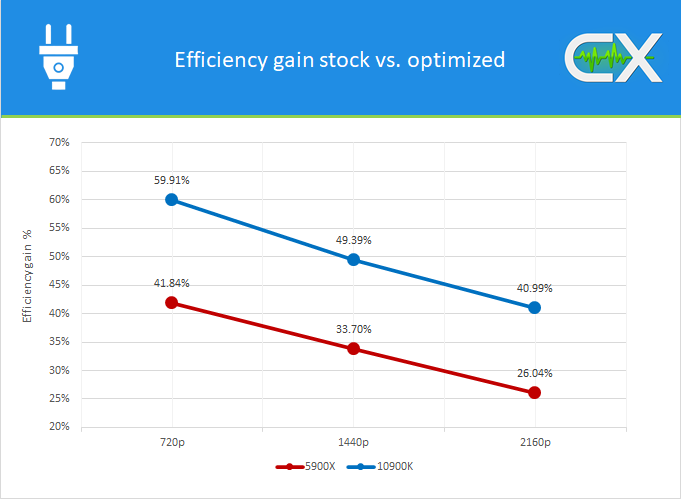

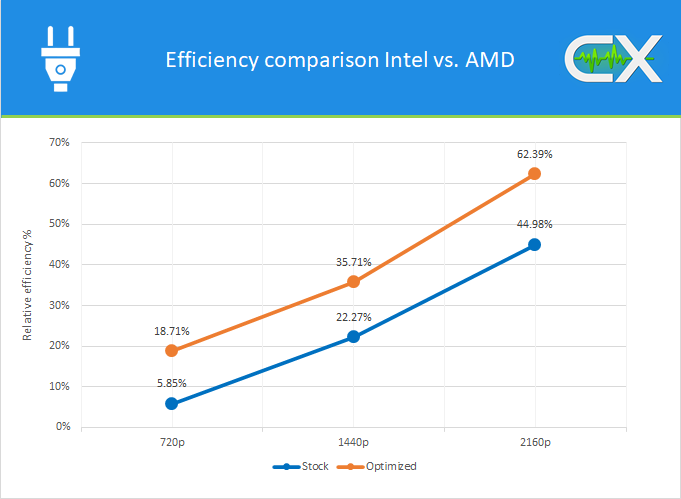

Efficiency gains across all resolutions

The graphic shows the efficiency increases achieved, in %, across all resolutions. The Intel system is always ahead, but the improvements are considerable overall. CPUs running at stock settings have enormous optimization potential.
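Since efficiency is FPS divided by package power, a higher frame rate and lower power consumption compound. The following sketch computes the gain of the optimized profile over stock; the function names and the example figures are hypothetical and do not correspond to any measured result.

```python
def efficiency_gain_percent(eff_stock: float, eff_optimized: float) -> float:
    """Relative efficiency increase of the optimized profile over stock, in %."""
    return (eff_optimized / eff_stock - 1.0) * 100.0


def efficiency_gain_from_components(fps_stock: float, power_stock_w: float,
                                    fps_opt: float, power_opt_w: float) -> float:
    """Same gain, computed from FPS and package power.

    (fps_opt / power_opt) / (fps_stock / power_stock)
        = (fps_opt / fps_stock) * (power_stock / power_opt)
    """
    return efficiency_gain_percent(fps_stock / power_stock_w, fps_opt / power_opt_w)


# Hypothetical example: 10% more FPS at 20% less power.
print(efficiency_gain_from_components(100.0, 100.0, 110.0, 80.0))
```

In this made-up example, 10% more performance combined with 20% less power yields a 37.5% efficiency gain (1.1 / 0.8 = 1.375), more than either effect on its own.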

Overall comparison Intel vs. AMD

What starts modestly at 5.85% at 720p with stock settings ends with a bang at 2160p with the optimized profile: there, the Intel system is more than 60% more efficient. These ratios ultimately surprised us as well.

Update (11/01/21): Following reader feedback, here is some further clarification on this graph. The percentages indicate how much more efficient the Intel system is than the AMD system at each tested resolution. Since two profiles (stock and optimized) are compared, the graph contains two curves.
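The two curves described in the update can be sketched as follows: for each profile and resolution, Intel's efficiency is divided by AMD's. The sketch assumes one efficiency value (FPS/W) per system, profile and resolution, since how the per-game values are aggregated is not specified here; the input numbers are hypothetical and are not the measured results.

```python
RESOLUTIONS = ("720p", "1440p", "2160p")


def intel_advantage_percent(eff_intel: float, eff_amd: float) -> float:
    """How much more efficient (in %) the Intel system is than the AMD system."""
    return (eff_intel / eff_amd - 1.0) * 100.0


def advantage_curves(eff: dict[tuple[str, str, str], float]) -> dict[str, list[float]]:
    """One curve per profile, one point per resolution.

    `eff` maps (system, profile, resolution) -> efficiency in FPS/W.
    """
    curves = {}
    for profile in ("stock", "optimized"):
        curves[profile] = [
            intel_advantage_percent(eff[("intel", profile, res)],
                                    eff[("amd", profile, res)])
            for res in RESOLUTIONS
        ]
    return curves


# Hypothetical efficiencies in FPS/W, for illustration only.
example = {}
for res in RESOLUTIONS:
    example[("amd", "stock", res)] = 1.0
    example[("amd", "optimized", res)] = 1.0
    example[("intel", "stock", res)] = 1.1
    example[("intel", "optimized", res)] = 1.5
print(advantage_curves(example))
```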

Is 7nm actually more efficient than 14nm?

That depends. We only looked at gaming workloads in this test, which only very rarely fully utilize a CPU. The picture would be different for heavily multithreaded applications. Nevertheless, AMD has room for improvement here: the boost algorithm seems to be too aggressive in GPU-limited scenarios. Idle consumption is also clearly too high compared to Intel CPUs, probably because the I/O die has no P-states.

Still, it is surprising how much more efficient the i9-10900K ends up being in games. This means that the manufacturing process alone is not decisive: architecture and intelligent power management are at least as important.